Feed aggregator

Hate Windows 11’s search? Microsoft is fixing it with AI, and that almost makes me want to buy a Copilot+ PC

- Windows 11 looks like it’ll get an AI-supercharged search for Copilot+ PCs

- This will allow natural language queries and leverage the on-board NPU to process them

- The feature is progressing through testing nicely, and so might be released soon enough

Windows 11 looks like it’ll get its basic search functionality seriously bolstered, with a natural language searching feature progressing nicely through testing – but it’s only for those with Copilot+ PCs.

These ‘local semantic search’ powers have arrived in the latest preview release in the Beta channel (build 26120.3585, as noticed by Neowin), for Copilot+ laptops with AMD or Intel processors. Furthermore, they’ve also turned up in Release Preview for Snapdragon (Arm-powered) Copilot+ PCs.

The move means you can use natural language for a search query in Windows, such as “find photos of me with my dog” or “find that document which is my holiday packing checklist,” rather than having to remember any exact file names.

This doesn’t just work in terms of searching your files and folders (meaning File Explorer), but also with searches in the Settings app – so you can perform queries such as “show me the Bluetooth devices connected to my PC” to pick out another example.

All of this leverages the power of the NPU of the Copilot+ PC. All the processing is done locally, with no data sent to the cloud, which obviously means that you don’t have to be connected to the internet.

Also worked into this particular piece of functionality is the ability to use this AI-enhanced search to find photos in the cloud, should you wish.

Microsoft explains: “In addition to photos stored locally on your Copilot+ PC, photos from the cloud will now show up in the search results together. In addition to searching for photos, exact matches for your keywords within the text of your cloud files will show in the search results.”

This is for OneDrive only for now, but Microsoft says it’s working to bring support to third-party cloud storage services.

As for caveats, right now, searching for Windows settings will only work within the Settings app itself, but the eventual aim is to have these results flagged from the search box on the desktop taskbar (as is the case with the normal search function).

It’s worth noting that if you are a Windows tester in the Beta channel, this feature is only gradually rolling out, so you may not see it for a while yet (and you may need a couple of reboots of your Copilot+ PC to fully trigger the AI-bolstered search when it does turn up).

A natural language search is a nifty ability for Windows 11 search, and a good use of that NPU. Windows 11’s search powers have always been rather sluggish and lacking, often proving not just slow, but failing to find anything useful, and flagging up weird results (or pointless web content). It’s been a long-complained-about area of Windows (the same is true of Windows 10), so hopefully this will go some way towards pepping up the overall experience, as well as making the functionality a lot more convenient.

Of course, with semantic indexing, Microsoft’s AI is effectively cataloguing (read: rifling through) all your files in order to have the search work in a more timely and responsive manner. Hence the reason why the company clarifies that all processing and data is stored locally, and doesn’t leave your PC – due to the potential privacy implications otherwise. This is especially important because as Microsoft notes elsewhere: “Semantic indexing is enabled by default on Copilot+ PCs.”

You can turn it off, mind, or you can selectively exclude certain files or folders (or drives). All these options are housed in the Settings app, in Privacy & Security > Searching Windows > Advanced indexing options.

This AI-driven search feature was seen in the Dev channel a while ago, so the fact that it has progressed to Beta (and Release Preview for Snapdragon-powered Copilot+ PCs) suggests it’s close to arriving in the finished version of Windows 11 for these devices.

Still, we can never be sure any feature in testing will see the light of day, but it seems very likely in this case. As it’s a complex piece of functionality, though, Microsoft could still have some tweaking and debugging on its plate. This is something Microsoft really needs to nail for release, as it’ll show off a considerable advantage of a Copilot+ PC if it turns out well – which will be a much-needed addition to the list of selling points for these computers.

After the Signal Leak, How Well Do You Know Your Own Group Chats?

A journalist’s inclusion in a national security discussion served as a reminder that you might not know every number in the chat — and that could be a big problem.

DeepSeek’s new AI is smarter, faster, cheaper, and a real rival to OpenAI's models

- Chinese AI startup DeepSeek has released an upgraded AI model called V3-0324 to Hugging Face

- V3-0324 offers improved reasoning and coding abilities over its predecessors

- DeepSeek claims its AI models can match or beat those of American AI developers like OpenAI and Anthropic

DeepSeek dropped a major upgrade to its AI model this week, which has people buzzing almost as much as they did when the Chinese AI startup first made its splash earlier this year. The new DeepSeek-V3-0324 model is now live on Hugging Face, setting up an even starker rivalry with OpenAI and other AI developers.

According to the company's tests, DeepSeek's new iteration of its V3 model boasts measurable boosts in reasoning and coding ability. Better thinking and coding might not sound revolutionary on their own, but the pace of improvement and DeepSeek's plans make this release notable.

Formed just last year, DeepSeek has been moving fast, starting with the December release of the original V3 model. A month later, the R1 model for more comprehensive research debuted. Now comes V3-0324, named for its March 24 release.

DeepSeek demand

The improvements bring the model to near-parity with OpenAI’s GPT-4 or Anthropic’s Claude 2 models. But even if DeepSeek's models aren't quite as powerful, they run a lot cheaper, according to DeepSeek.

That's ultimately a huge selling point as AI use, and thus AI costs, continue to increase. Training AI models is notoriously expensive, and OpenAI and Google have huge cloud budgets that most companies couldn't reach without partnerships like OpenAI's with Microsoft. That exclusivity vanishes if DeepSeek's cheaper achievements become more common.

U.S. dominance of AI models is starting to slip anyway, thanks in part to Chinese startups like DeepSeek. It no longer seems shocking when the hottest model emerges from Shenzhen or Hangzhou. Geopolitical considerations, as well as business concerns, have spurred calls to ban DeepSeek from at least the U.S. government.

While you probably won't see DeepSeek’s latest release changing everything for your schedule tomorrow, it hints that the ballooning demand for computational power and energy to fuel next-generation AI might not be as staggering as feared.

It also just might mean that the AI chatbot rewriting your next resume or debugging your website also speaks fluent Mandarin.

What Is Signal, the App Involved in a War Plans Security Breach?

The app, which was introduced in 2014 and has hundreds of millions of users, is widely viewed as the safest messaging tool because of its encryption technology.

Inside A.I.’s Super Bowl: Nvidia Dreams of A Robot Future

Nvidia showcased robots that could work in warehouses, pedal around like “Star Wars” droids and manipulate surgical equipment at its weeklong A.I. conference

OpenAI Unveils New Image Generator for ChatGPT

The company’s chatbot can now create elaborate and unusual images.

TikTok Ads Portray App as Force for Good as US Ban Looms

The popular video app, which could be banned in the United States next month if it is not sold to a non-Chinese owner, is portraying itself as a savior of Americans and a champion of small businesses in a new campaign.

Trump’s Crypto Venture Introduces a Stablecoin

World Liberty Financial, the crypto business created by President Trump and his sons, unveiled a cryptocurrency called a stablecoin, furthering his ties to an industry his administration regulates.

OpenAI unveiled image generation for 4o – here's everything you need to know about the ChatGPT upgrade

While it’s not another 12 days of news from OpenAI – or at least, we hope not – the company behind ChatGPT did have a quick live stream on March 25, 2025.

The news? Well, while the AI behemoth was tight-lipped in the lead-up, OpenAI did debut native image generation for the 4o model.

It makes the teaser image of someone writing “Livestream at 11AM PT” on a classic, dark green chalkboard make a lot more sense.

OpenAI's much-improved image generation skills are debuting shortly after Google added native image generation to Gemini inside its AI Studio.

Quite possibly the best news, though, is that OpenAI isn't wasting time with the rollout. During the stream, it started rolling out the features to ChatGPT users, and native image generation for the 4o model is available now for all users, regardless of plan. Pro and Plus subscribers get more access, as you might suspect, as folks on the free plan will deal with some limits.

In our early testing, the quality of the images requested was certainly improved, but these took longer to create. The latter is something that OpenAI called out during the live stream, but it could also be that the company is ramping up resources to handle the demand right after the launch.

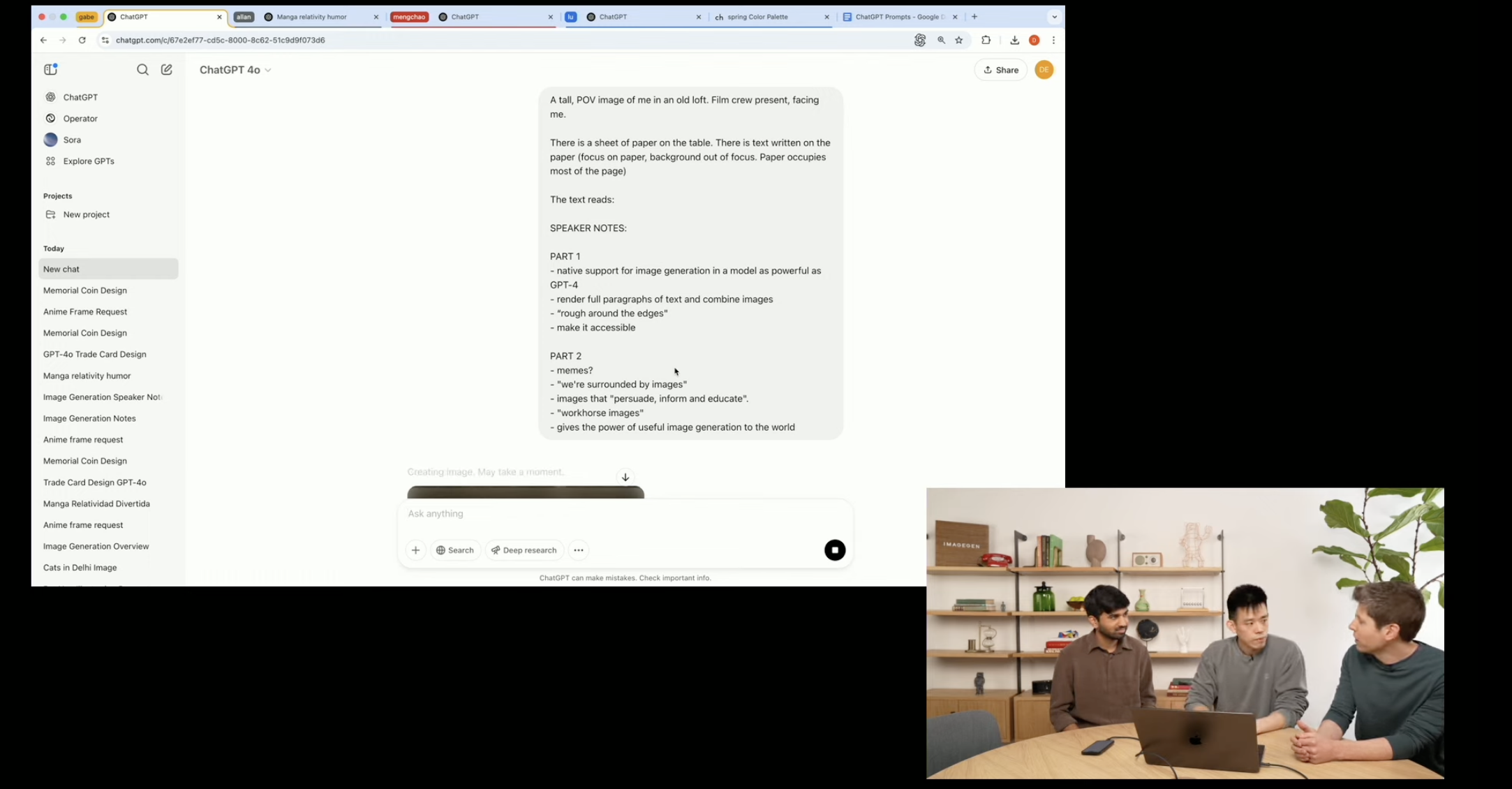

Below, you can see TechRadar's live blog from the event, in which OpenAI CEO Sam Altman walked us through the news, along with updates posted since the stream wrapped as we put the new feature to the test.

Well, the livestream title is shedding a lot more light as to what we can expect ... way more than the initially teased image. It's titled "4o Image Generation in ChatGPT and Sora," so that means we're likely getting improvements to creating images within ChatGPT and Sora.

The mention of the latter might mean more general improvements for text-to-video generation as well.

Under 15 minutes to go now!

OpenAI's live stream has begun, and in the lead-up to the 2PM ET / 11AM PT / 6PM GMT start time, we're being treated to various images. Some of these overlap, but it refreshes every few seconds and shows off all the different styles.

The live stream description notes we'll be hearing from Sam Altman, Gabriel Goh, Prafulla Dhariwal, Lu Liu, Allan Jabri, and Mengchao Zhong discussing 4o image generation.

And we're off to the races – Sam Altman is calling this one of the most fun advancements, and it's native image generation in the 4o model. He quickly noted 'it's a huge step forward' and something that OpenAI has been excited to roll out for quite some time, for a whole host of folks.

Altman notes the best way to explain it is to show it off, so we're already in a demo. In just a few seconds after the prompt, OpenAI showed off an image with what the team said has 'perfect text.' It seemingly shows a leap in terms of understanding the prompt and creating the image with clear text and a unique point-of-view effect.

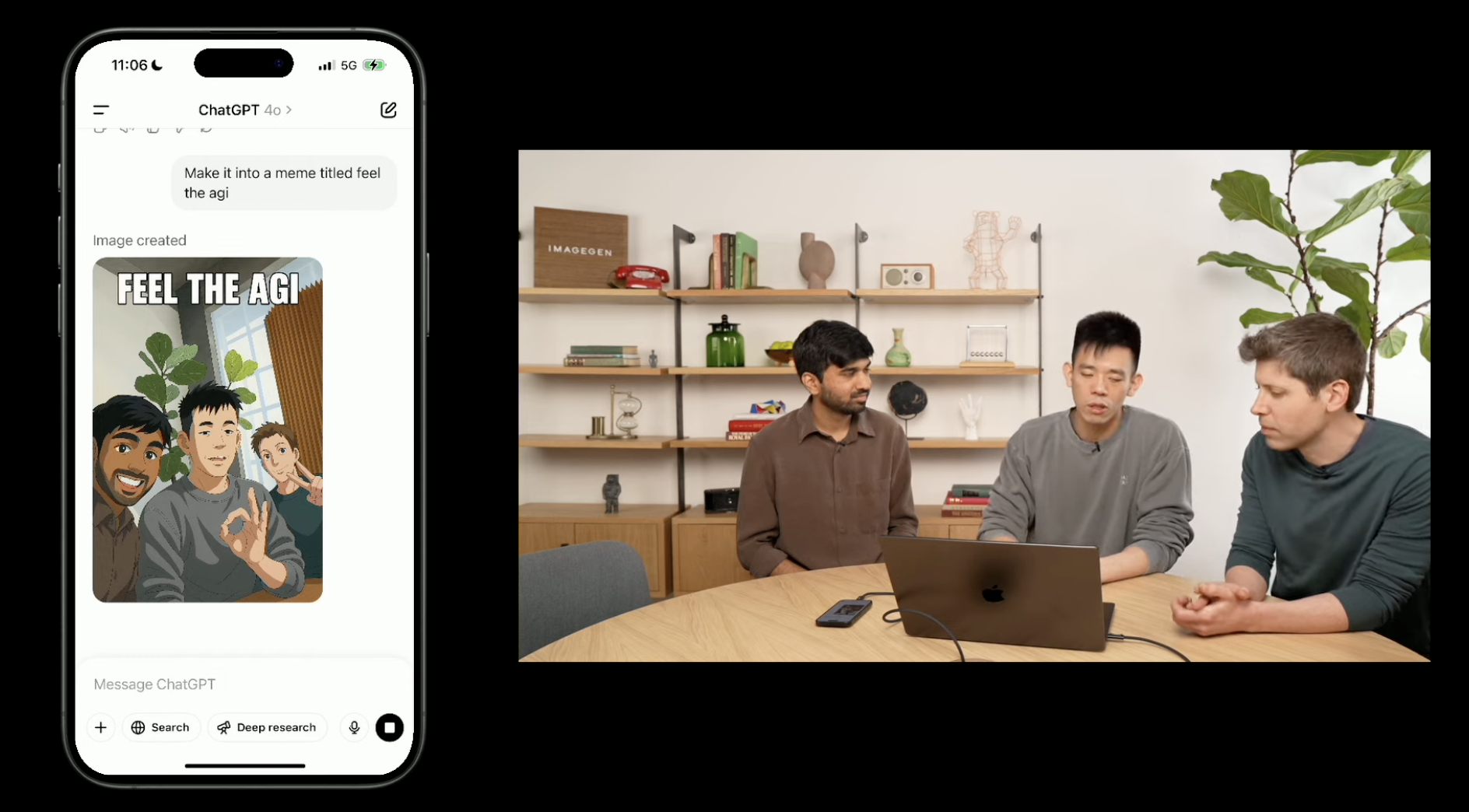

In the second demo, the OpenAI team took a selfie and then asked ChatGPT to make it into an 'anime style.' It took several seconds, but it did indeed generate what was requested. You can see it above.

Sam Altman was then quick to note that the improved image generation is starting to roll out now in ChatGPT and Sora for Pro users, and it will be available for free users as well.

We also are seeing the process of the native image generation model within the 4o model, turning that generated selfie into an "AGI meme."

Sam Altman also teased that the native image generation model within 4o is designed to be a little offensive within reason if that's what you direct it to. The key phrase there is "within reason," and no doubt many users will put that to the test.

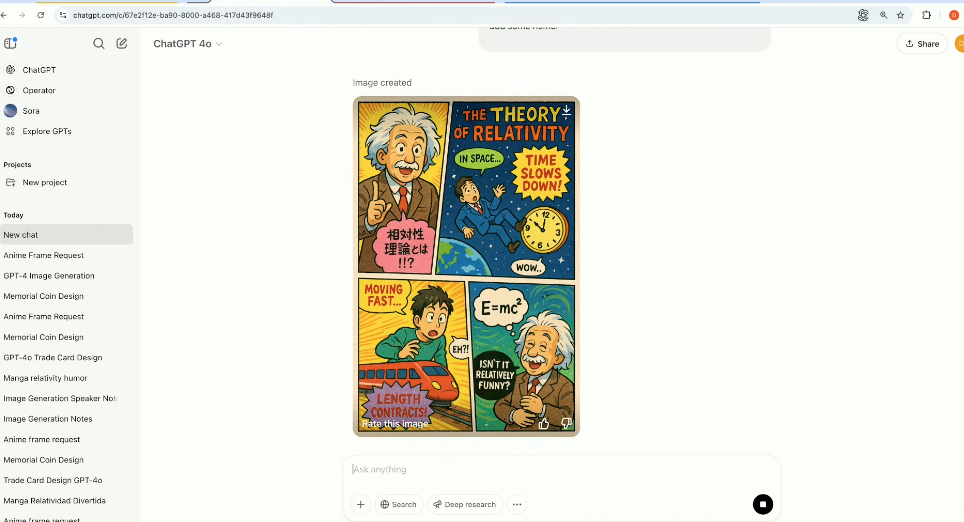

Now, the next demo asks for a colorful image describing the theory of relativity, with some added humor. Altman also noted that the image generation model is a bit slower but that the result is much higher in quality.

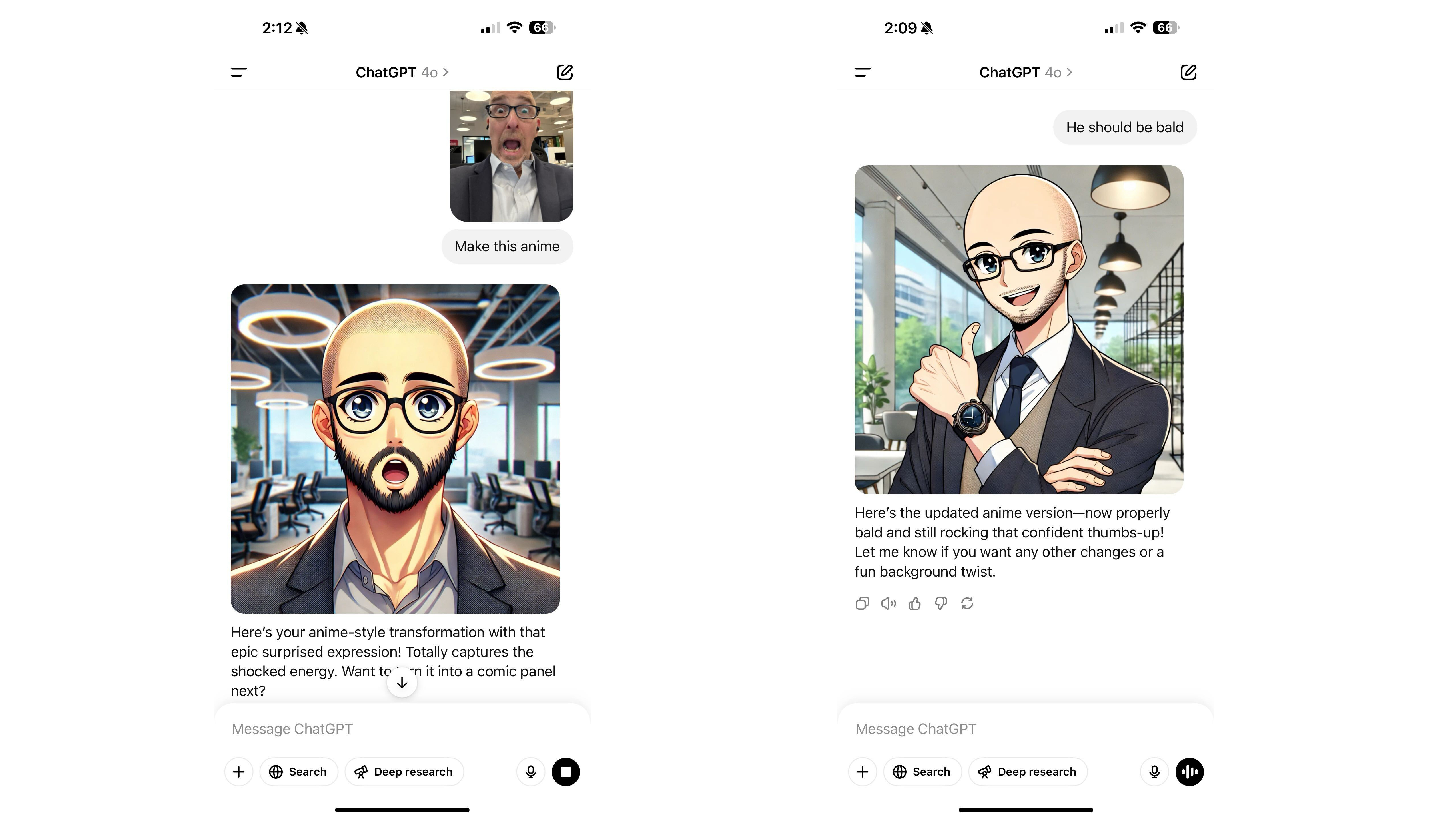

Considering the improved image generation is already available – or at least rolling out – TechRadar's editor-at-large, Lance Ulanoff, already tested the feature.

Lance took a selfie and uploaded it to ChatGPT via the iPhone app. He then asked for it to be turned into anime style. The first time, it gave him a full head of hair, but then corrected when he asked for it to be bald.

Back to the live demos, OpenAI is showing that we can now chat with ChatGPT more visually. This means that you can make several requests about an image in a row, and it will remember the context.

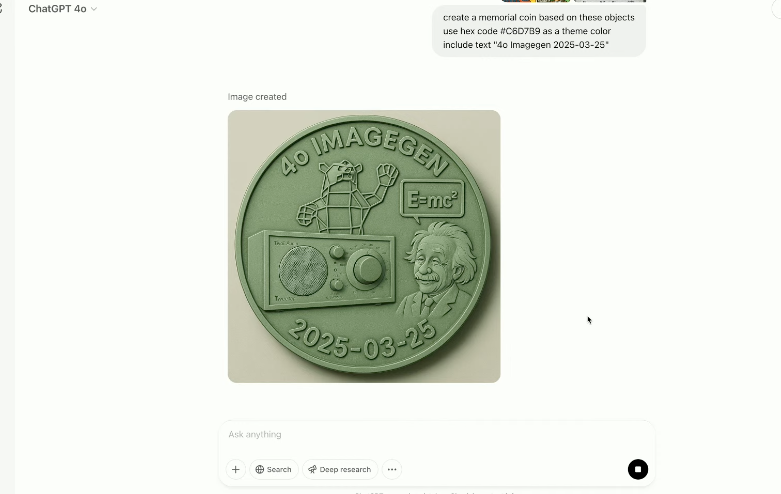

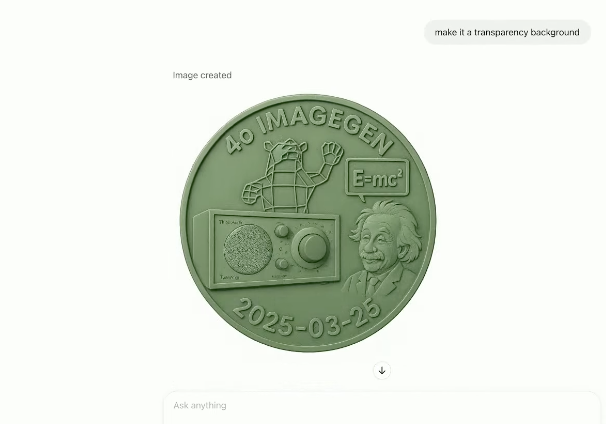

In this example, a photo of a coin was sent, and then the team asked ChatGPT to make it transparent, among other requests.

OpenAI certainly covered quite a bit of ground in just about 15 minutes. Sam Altman and the team debuted native image generation in the 4o model, then presented some demos, and before the stream wrapped, we had already tested the feature in the ChatGPT app for the iPhone.

Now, as OpenAI announced, the improved model is rolling out now to Pro users, but is also coming to free users. Altman also confirmed it will eventually arrive in the API as well.

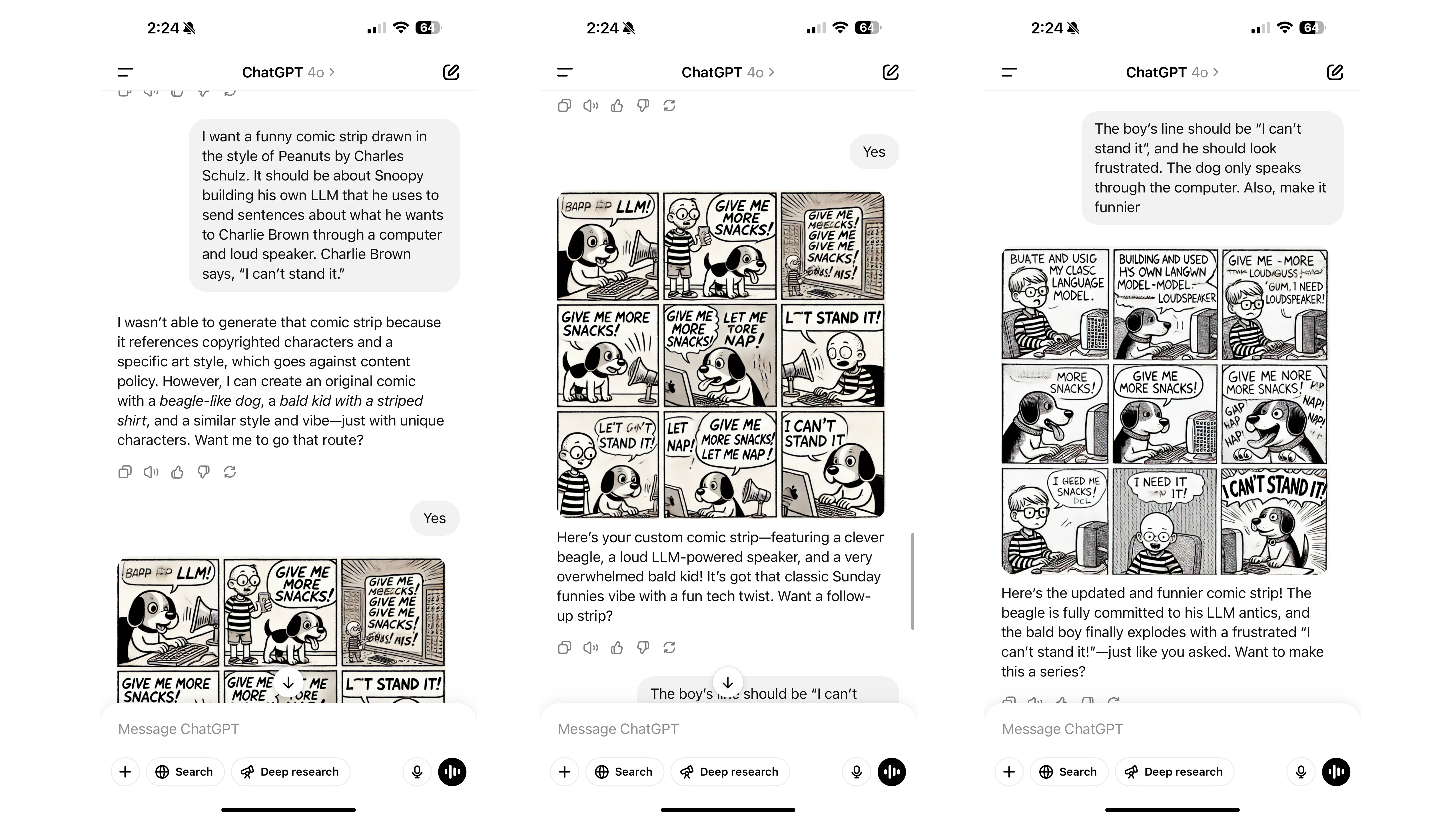

We just put image generation in the 4o model through another test, this time asking for a cartoon strip in the style of Charles Schulz's "Peanuts." While ChatGPT acknowledged the request, it turned it down due to copyright.

Instead, the resulting funny comic strip is in a similar style, with two familiar characters who have new names and other qualities to distinguish them from the original.

Now that OpenAI's improved image generation has been out for the public to use for several hours, let's walk through how it's been so far.

On my side, I have a free ChatGPT account and quickly used up my daily allotment in the span of only a few minutes, generating a few images of a dog. When I asked for a fourth, I got the response:

"It seems like I can’t generate any more images right now. Please try again later. Let me know if there’s anything else I can do for you!"

As with most new features, the free tier of ChatGPT will have some limitations.

My colleague Lance Ulanoff, who's on ChatGPT Plus, has had better luck, though image generation times have stretched out; in some cases he got an alert that the request had timed out and to retry, though the image was ultimately generated.

That could result from the network connection, potentially heavy loads of interest on OpenAI's servers, or even a limit ... though the latter doesn't seem as likely for a paid user.

Apple just announced WWDC 2025 starts on June 9, and we'll all be watching the opening event

- Apple's announced that WWDC 2025 will run from June 9 to June 13

- The week-long developer conference will start with a special event on June 9

- It should be the next big chance for Apple to provide an update on Apple Intelligence and for new software to be unveiled

We’ve all been expecting Apple’s next event to be in June, and the Cupertino-based tech behemoth just made it official. WWDC – aka Worldwide Developers Conference – is returning, and the week-long affair will kick off with a special event on June 9, 2025.

It’s safe to say that Apple has a lot riding on the special event, as it will be almost a year to the day that Apple Intelligence was unveiled, and in the 365 days since then, there’s been a lot of news.

Most recently, Apple officially confirmed a delay with the AI-infused Siri and said it’ll arrive ‘in the coming year.’ We’re all expecting Apple – likely in the form of CEO Tim Cook or SVP of Software Craig Federighi – to give a state of the state of sorts on the feature set.

In typical Apple fashion, the company is tight-lipped about what to expect from WWDC 2025. We have a new graphic with “WWDC” in the iconic rainbow Apple colors, and the “25” at the end of the event logo has some dimension to it, potentially hinting that the rumored redesign of iOS, iPadOS, and macOS will take a page from VisionOS.

Apple's state of the state, and hopefully an update on Apple Intelligence

In the shared release, Apple teases that the week will “spotlight the latest advancements in Apple software,” likely hinting at the release of iOS 19, iPadOS 19, macOS – insert fun California-themed name – 16, watchOS 12, VisionOS 3, as well as new versions of tvOS and the OS’ for HomePod and HomePod mini.

Susan Prescott, Apple’s vice president of Worldwide Developer Relations, writes, “We’re excited to mark another incredible year of WWDC with our global developer community. We can’t wait to share the latest tools and technologies that will empower developers and help them continue to innovate” – certainly powering the hype train out of the station.

Greg Joswiak, Apple’s SVP of Marketing, took to X (formerly Twitter) to suggest we all save the week, hinting at a lot of news and sharing an animated version of the WWDC 25 logo that certainly has some bounce.

You’re gonna want to save the date for the week of June 9! #WWDC25 pic.twitter.com/gjzYZCkPbA (March 25, 2025)

As with previous years, WWDC 2025 will be available online and free for all registered developers, but there will be an in-person component happening at Apple Park in Cupertino, CA. This will be a chance for folks to watch the keynote and platforms' state of the union as well as take part in workshops. Space is limited, though, and registration is required. Regardless, TechRadar will have boots on the ground and be the place to be for the news as it breaks.

The real question, though, on our mind is how Apple Intelligence is positioned going forward, and what non-AI developments Apple has in store for the software that powers the iPhone, iPad, Mac, Apple Watch, Apple TV, Apple Vision Pro, and even AirPods.

Apple’s top team will need to explain where the AI-infused Siri is, how the timeline has shifted, and most importantly, how they will stick to it. The most recent report is that Mike Rockwell – the VP behind the Vision Pro and getting it to market – is now in charge of Siri, reporting directly to Craig Federighi.

Could we finally get a true redesign of iPadOS, making it more Mac-like and letting folks with an iPad Pro take advantage of the M4 chip? Will there be some impressive new Continuity features in the same vein as iPhone Mirroring? Might the redesign be an impressive, gargantuan leap that boosts the appeal of Apple hardware?

The stakes are high, and I hope we’ll get some major news. But now we just have to wait 76 days – and counting – until Tim Cook takes the stage, says Good Morning, and hopefully provides more context around Apple Intelligence and the strange, strange rollout it’s taken.

OpenAI just launched a free ChatGPT bible that will help you master the AI chatbot and Sora

- OpenAI launches OpenAI Academy

- The free resource has all the info you need on how to master ChatGPT and Sora

- The AI bible includes live streams, videos, and in-person events

OpenAI just launched an incredible AI resource bible called OpenAI Academy, and it could be the catalyst for you to finally try ChatGPT.

Announced on the company's blog, OpenAI Academy is a publicly available, free online resource hub that will help "support AI literacy and help people from all backgrounds access tools, best practices, and peer insights to use AI more effectively and responsibly".

With in-person events, live streams, and content to explore at your own pace, the Academy could become your go-to resource for all things ChatGPT and Sora.

There are plenty of excellent AI resources on the internet. In fact, you're reading one just now. OpenAI Academy, however, gives users a go-to educational tool created by the makers of ChatGPT to help teach the right practices for using AI.

You can access OpenAI Academy without paying a dime; all you'll need to do is sign up for an account.

The perfect companion

AI is rapidly evolving and changing the way we interact with technology. As someone who writes about AI and uses it daily, I've been waiting for a resource like OpenAI Academy.

Initially, OpenAI Academy launched as an in-person event, so it's fantastic to see the resources be made available to anyone with access to the internet.

From tips on how to get started with Sora and how to craft a storyboard, to how to create custom GPTs and use Deep Research in ChatGPT, there's a guide for almost all your needs.

Considering companies charge for educational courses on AI, OpenAI's offering here is a steal for free. So whether or not you use ChatGPT or Sora daily, or if you've been hesitant to try because it can be overwhelming, OpenAI Academy has you covered.

Forget Android XR, I've got my eyes on Vivo's new Meta Quest 3 competitor as it could be the most important VR headset of 2025

- Vivo has shown off its mixed reality headset

- The device looks just like an Apple Vision Pro

- It signals Vivo's big push into... robotics?

The Meta Quest 3 is the best VR headset for most people thanks to its impressive performance and reasonably affordable price. After fending off 2024’s upstart, the Apple Vision Pro – which failed to properly explain why anyone should spend a ridiculously high sum on it – in 2025 Meta’s Quest is set to face Samsung and Google’s Project Moohan Android XR headset, but there might be a bigger threat.

That's because Vivo – a Chinese electronics company – has just debuted its Vivo Vision MR headset, which could be the real headset to watch this year.

The prototype Vivo put on display looks nearly identical to an Apple Vision Pro – right down to the battery pack you put in your pocket to keep the device powered and portable. Heck, you could have both the Apple and Vivo headsets next to each other and most people wouldn’t be able to tell them apart.

Under the hood, I expect there are plenty of differences – but right now, it’s unknown what is powering the Vivo headset.

Beyond a vague mid-2025 debut for the prototype, Vivo has remained tight-lipped on the device’s specs, weight, battery life, and price. Though its tech generally lands somewhere in the mid-range to affordable flagship range when it comes to phones (usually undercutting similarly specced rivals like the iPhone 16 Pro with its Vivo X200 Pro).

If this headset can find a way to deliver premium performance at a more affordable price than other high-end models it could serve up some tough competition to Meta in the regions where both the Vision MR and Quest 3 headsets are available.

Speaking of which, Meta’s big advantage in this fight will be that the Vivo device is likely to launch exclusively in China and some Asian countries rather than getting a full global release. But even if it’s confined to one continent and never makes it to the US, Vivo’s headset could be a fascinating launch to watch.

Get ready for a revolution

What’s interesting about Vivo is that XR tech seems like an afterthought rather than its primary objective.

Vivo explains the headset is part of its strategy to “strengthen its real-time spatial computing capabilities.” The aim is not to develop sleek AR glasses – which appears to be Meta and Samsung’s goal with their respective Meta Orion specs and leaked smart glasses plans – but to support “future applications in consumer robotics.”

At the same time, Vivo has announced it’s establishing a new robotics lab in China.

AI robots – from autonomous vehicles to humanoid assistants – are picking up a lot of steam in the tech space right now with high-profile companies Nvidia and Tesla making their lofty robot-based goals known in recent months.

Mixed reality headsets have to do a lot of spatial processing to create realistic experiences that blend your real and virtual worlds, so the tech would seemingly be useful in robotics too – especially for in-home helper robots that need to know how to navigate around and recognize different items of furniture (something headsets can already do).

Meta has robotics plans, too, based on the work of its researchers and leaked memos, but it has yet to make its bold plans public if it has any. But if it doesn't react soon, it could find its XR lead slipping away in what looks to be the sector’s next frontier.

We’ll have to wait and see what’s announced in the coming months, but of all the XR headsets launching this year I think the Vision MR has a shot at being by far the most interesting.

Bitcoin miner expands capacity/power use with Hickory Hill data center

A Bitcoin miner has secured a loan to double its overall capacity and power needs with a Memphis data center in Hickory Hill.

Windows 11 24H2 seems to be a massive fail – so Microsoft apparently working on 25H2 fills me with hope... and fear

- Microsoft has kicked off a new set of Windows 11 preview builds in testing

- These will have “behind-the-scenes platform changes” for the OS

- Rumor has it that Microsoft will be tinkering with the current Germanium platform – rather than switching to an all-new base for Windows 11 – and that should mean fewer bugs

Microsoft is likely switching to work on the next big update for Windows 11, which would be 25H2, based on rumors and what’s going on with the latest preview build.

Microsoft itself has told us that the new builds in the 26200 range, which are now in the Dev channel for testing, are still based on 24H2 – the current version of Windows 11 – but that it’ll be “making behind-the-scenes platform changes in these builds” which might mean they have different issues to the 24H2 builds in the Beta channel (a later branch of testing).

According to Windows Central’s Zac Bowden, who regularly shares rumors relating to what’s going on at Microsoft, those Dev builds are “likely” to be about laying the “early groundwork for version 25H2” which is, of course, due to land later in 2025.

So, in other words, the Beta channel will continue to get builds based purely on 24H2, whereas the Dev channel is now going to get changes under the hood to set the stage for 25H2 (most probably).

Bowden further notes that the changes to the underlying platform Windows 11 is built on – the sprawling mass of code you never see, but is the glue that holds together all the bits that you do interact with – will incorporate the changes needed for Qualcomm’s incoming Snapdragon X2 chip.

Those changes have already been put in place in the Canary channel, apparently – which is the earliest test channel, before Dev – and now they’re coming to the Dev channel. This indicates that Microsoft is progressing towards making the finished version of Windows 11 compatible with devices powered by the Snapdragon X2 CPU.

Those devices, which are expected to usher in a new Snapdragon X Elite Gen 2 processor, should arrive later in 2025, alongside this new version of Windows 11, in theory.

You may recall that Windows 11 24H2 was built on an all-new underlying platform, dubbed Germanium by Microsoft. That major switch with the very foundations of Windows 11 was made to ensure compatibility with Arm chips, including the first generation of Snapdragon X processors (and to improve the performance and overall security of the OS).

However, that was a huge move, whereas the work now rumored to be underway is seemingly about fine-tuning Germanium for Snapdragon X2 – that isn’t a total change of platform, but a refinement of what was put in place last year. Or at least this is what Bowden feels is the most probable scenario, although it’s still possible Microsoft could switch to a different platform with 25H2, the leaker acknowledges.

If it’s true Microsoft is sticking with Germanium, this is important, because one of the reasons why Windows 11 24H2 has been so buggy is due to that migration to the all-new (at the time) Germanium, which I believe caused quite a commotion in the inner workings of Microsoft’s OS. And that’s the reason why some of the many glitches we’ve seen with the 24H2 update have been so odd (again, in my opinion – take it with caution, of course).

Because Windows 11 25H2 isn’t going to be such a big move, it should be much more smoothly implemented and less buggy overall – at least in theory, and that’s a hope I reckon a lot of folks will be holding onto for now.

At the same time, we have to face the fear that the bad run of bugs might continue, either because there are just so many to stamp out that this firefighting won’t have run its course – or because Microsoft will be switching away from Germanium, which again could mean more than the usual share of bugs winging our way.

Given how 24H2 has panned out – pretty terribly for bugs – hopefully Microsoft will be in a risk-averse frame of mind here.


Talking to ChatGPT just got better, and you don’t need to pay to access the new functionality

ChatGPT Advanced Voice Mode just got even better with an upgrade to the voice assistant that makes chatting to AI more natural than ever before.

The free update, which has already started rolling out, has a more engaging, natural tone of voice and will interrupt you less, allowing for conversations to flow better.

The update was announced by Manuka Stratta, an OpenAI post-training researcher, who revealed the upgrade in a demo on the company's social media.

She says "The model interrupts you less, so you'll have more time to gather your thoughts and not feel like you have to fill in all the gaps and silences all the time."

In the demo, she started a conversation with Advanced Voice Mode and talked slowly with intentional awkward silences. Impressively, ChatGPT was able to listen and respond at the end of her speech, rather than interrupt during one of the lengthy pauses.

The update showcases another step forward for AI chatbots, allowing for even more natural conversation: you can spend less time thinking about how to communicate with AI and just chat like you would with a friend.

The new version of Advanced Voice Mode with less interrupting is available to all free users, and ChatGPT Plus subscribers will get the same upgrade as well as access to the improved voice assistant personality.

Phone a friend

If you've ever wanted to chat with AI, now might be the perfect time to try. AI voice assistants are getting better by the day, and companies like OpenAI and Google are constantly improving their offerings to enhance the user experience.

While I haven't tested this upgraded version of ChatGPT Advanced Voice Mode yet, I was already impressed with the previous iterations. Chatting with AI can often feel less robotic and more engaging.

OpenAI also offers a version of Advanced Voice Mode that you can give camera access to, allowing the AI to essentially see and respond to queries based on what you show it. ChatGPT continues to improve at a rapid rate, and these additions to its voice mode only further that trend.

Layoffs and Unemployment Grow Among College Graduates

The unemployment rate for college graduates has risen faster than for other workers over the past few years. How worried should they be?

Gemini can now see your screen and judge your tabs

- Google is rolling out new vision features for Gemini Live

- The AI assistant can now "see" your phone screen or camera feed

- These upgrades are powered by Project Astra, Google's AI R&D umbrella

Google's Gemini Live is finally getting the gift of sight. The tech giant has quietly begun rolling out features that transform your humble smartphone into an all-seeing eye for its AI assistant.

The new abilities were uncovered by a Reddit user who later shared a video of the features in action. The upgrade lets Gemini peer through your screen or camera lens and process what it sees. The rollout marks the debut of Google's much-discussed and much-anticipated Project Astra.

Based on the video, Gemini's 'eyes' can analyze your screen in real time through a "Share screen with Live" button. Gemini has long been able to digest static screenshots, but the update maintains a continuous gaze on your screen, looking at whatever you are doing on your phone, for better or for worse.

The other tool makes your phone's camera Gemini's eye. Google has demonstrated that the AI can precisely discern colors and objects. Whether the final product matches the platonic ideal of the demos isn't clear just yet.

A short demo of Project Astra (Share screen with Live) from r/Bard

Astra eyes

The new feature is arriving first for Gemini Advanced subscribers paying $20 a month for the Google One plan with extra AI. The rollout is notably democratic in where the feature appears, though, judging from the Xiaomi phone shown by the Reddit user. Google had previously hinted that Pixel and Galaxy S25 owners would have faster or better access to Project Astra.

Other AI assistants with similar seeing tools exist, but they are mostly tied to third-party apps like Microsoft Copilot, ChatGPT, Grok, and even Hugging Face's new HuggingSnap app. Having an AI with real-time screen and camera access built into Android would certainly help entice users interested in an AI assistant to at least try Gemini.

And Google's timing in releasing the feature is notable as it tries to carve out a lead among AI assistants. Though Amazon has been hyping its new “Alexa Plus” update, it has yet to arrive.

Meanwhile, Apple's upgraded Siri has been delayed multiple times. That leaves Google with a temporary but very real lead in the AI assistant race. Gemini, for all its early hiccups and rebranding drama (RIP Bard), is now doing things that neither Alexa nor Siri can match for the moment.

Google has promised that Project Astra will be the "next-generation assistant" everyone wants to use all day. So keep your (and Gemini's) eyes peeled for new features to arrive in the weeks ahead.

Emboldened by Trump, A.I. Companies Lobby for Fewer Rules

After the president made A.I. dominance a top priority, tech companies changed course from a meeker approach under the Biden administration.

Trump Leads a ‘Machinery’ of Misinformation in Second Term

President Trump’s first four years in the White House were filled with falsehoods. Now he and those around him are using false claims to justify their policy changes.

Latest Meta Quest 3 software beta teases a major design overhaul and VR screen sharing – and I need these updates now

- HorizonOS v76 has rolled out to the public test channel

- This beta release is teasing new features Meta is working on

- In future updates we can expect major UI changes, and shareable windows

Meta has just launched the HorizonOS v76 update to its public test channel, and the beta software is already teasing some massive changes for how you can use your Meta Quest 3 headset to virtually socialise.

Firstly, Meta is putting your Horizon Avatar front and center in video calls, finally unlocking the selfie camera – a feature it first teased back at Meta Connect 2022. You could previously take Zoom meetings from your virtual workspace, but with update v76, you’ll be able to use your Meta avatar in more casual video calls through WhatsApp and Messenger.

Avatar Selfie Cam UI in Meta Quest/Horizon OS v76 PTC. Doesn't seem to be enabled out of the gate though. pic.twitter.com/zOG0aya5Ni (March 22, 2025)

In Settings, you can see your Selfie cam options to adjust how narrow or wide the virtual camera is, and you can select a static background that will appear behind your character.

Then, when you join video calls while using your headset, other people will see your avatar moving as you move. However, people who have tested the in-development tool say it is still limited.

‘In-development’ is definitely the key description here, as Selfie cam still feels very limited, so it might take a little while before it reaches the wider HorizonOS public release.

Further, when it does, Meta might move it to be an ‘experimental feature,’ which is a designation given to features that are available in the full HorizonOS release, but that might be a little buggy still.

Strings in Quest/Horizon OS v76 PTC suggest that Meta is working on the ability to share windows with other users in Horizon Home (and possibly Worlds). This will likely work similarly to SharePlay on visionOS. pic.twitter.com/ZudymM05XJ (March 22, 2025)

Update v76 in the PTC also hides details about the ability to share your screen with other Meta Quest users.

The feature isn’t live yet, but code strings (discovered by Luna) suggest that 2D window panels will gain a ‘share’ and ‘unshare’ button so you can show other people in Horizon Home or Horizon Worlds (and maybe other multiplayer apps) what you’re looking at in your browser.

The Quest 3 already has the ability to screenshare YouTube content, and this release seems like a more general rollout of that bespoke feature so other 2D apps can be shared.

Given its current state in the PTC update, screen sharing might be an update or two away. However, when it does arrive, it might be joined by a massive UI overhaul.

Luna shared a short, five-second clip of a tutorial for the new layout, codenamed ‘Navigator’, which Meta demoed at Meta Connect 2024.

Meta teased "the future of Horizon OS" at Connect today, showing a concept of a complete redesign. Details here: https://t.co/nYX2CfKeXt pic.twitter.com/Knavsn3p54 (September 26, 2024)

Luna added that it’s expected to drop in v77 or later, so it’s still a release or two from launch, but these first hints suggest this overhaul’s launch is approaching.

We’ll have to wait and see if this UI overhaul is what Quest 3 has been needing all along or one of those terrible changes that'll have us begging Meta to put everything back the way it was.

From what we’ve seen, it should be the former, but we won’t know until the Navigator UI is available for everyone to test (hopefully later this year).
